"MAiMATE" (マイメイト): FX Powered by AI

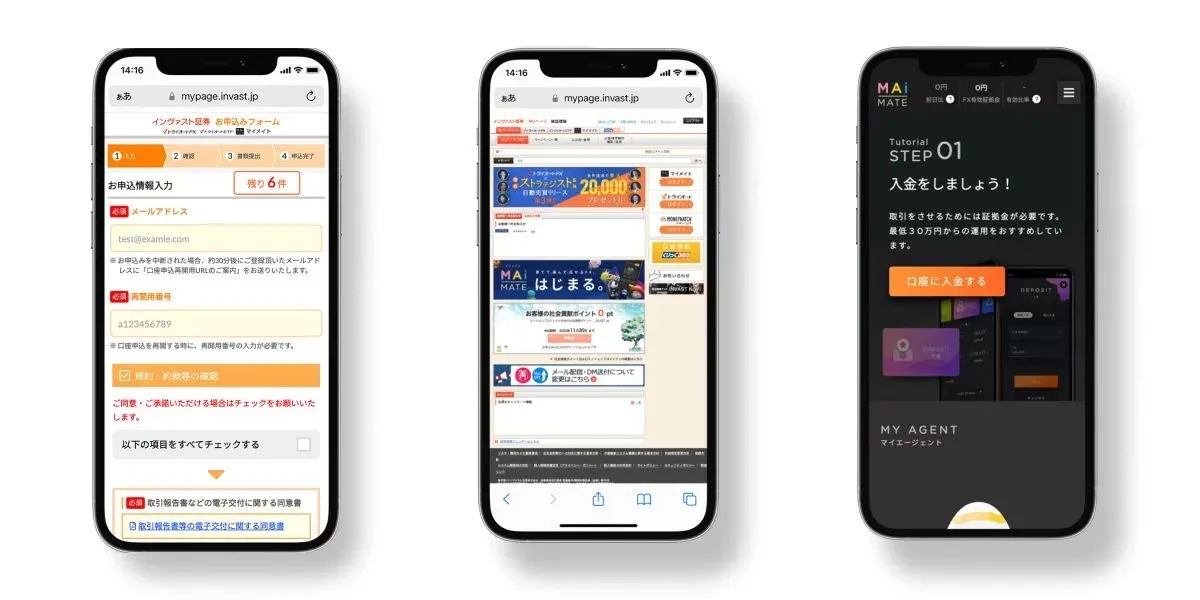

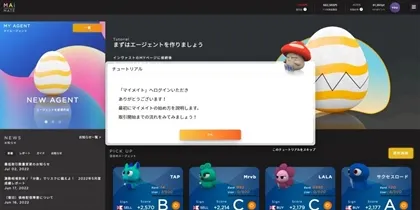

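"MAiMATE" (マイメイト) is a new FX service that uses AI (artificial intelligence).

Its main feature is that you can leave trading decisions, such as buying and selling, to the AI.

The AI automatically makes decisions such as when to buy and when to sell.

Other FX services that let you delegate trading (automated FX trading) already exist, but MAiMATE "keeps learning automatically," a feature that earlier automated FX trading services do not have.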

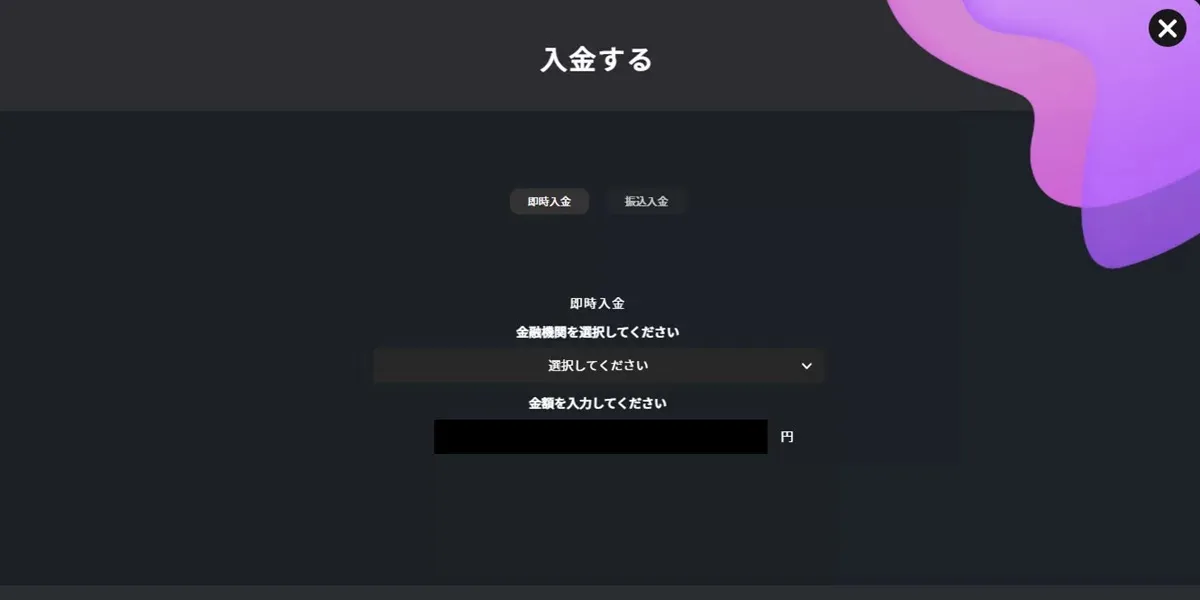
